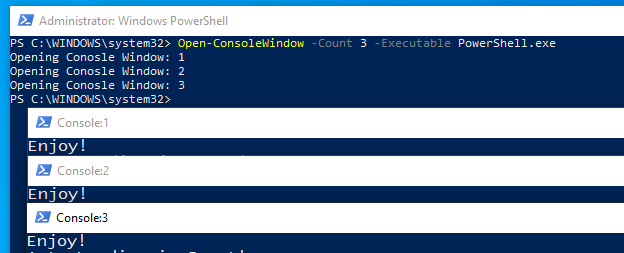

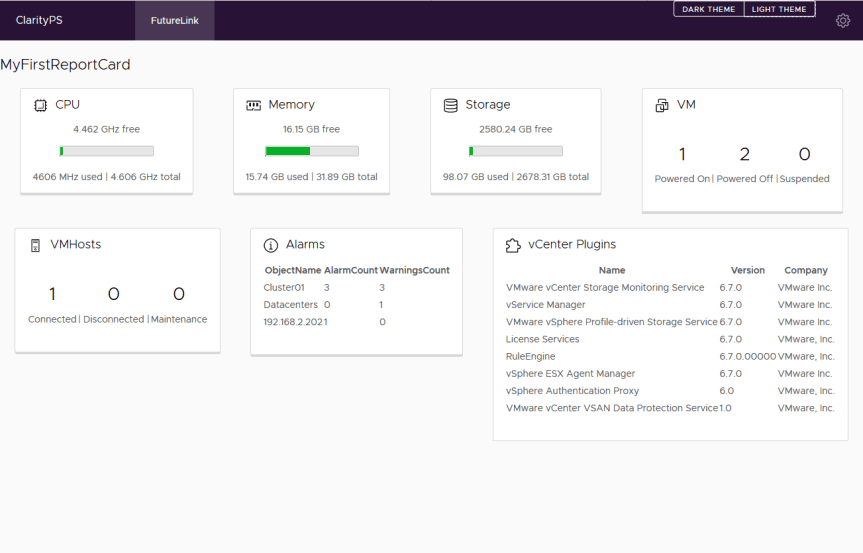

Finally! I’ve updated the ReportCardPS module to be more user-friendly. I had some free time while attending the PowerShell and DevOps Summit this past week. This module creates card-based reports in HTML, using the VMware Clarity HTML/JavaScript library.

I think I need to add some text to the icon above…

To separate ‘form and function’, I have created a second module, ClarityPS, to handle the VMware Clarity piece. This way you could plug any HTML/JavaScript solution into ReportCardPS. But I’m kinda biased toward VMware Clarity…

For the most part, unless you are building customized reports, you can forget about ClarityPS. Just know that it exists, and that there are still quite a few Clarity elements I have yet to expose as PowerShell functions.

To get started, install ReportCardPS (this will auto-install ClarityPS):

Install-Module ReportCardPS
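If you want to confirm that both modules landed, a quick check along these lines should do it. This is only a sketch: it assumes the dependency is published under the name ClarityPS, as it is referred to in this post.

```powershell
# List the installed copies of both modules and their versions.
# Assumption: the auto-installed dependency is named 'ClarityPS'.
Get-Module -ListAvailable -Name ReportCardPS, ClarityPS |
    Select-Object Name, Version, ModuleBase
```

The ModuleBase column shows the folder each module was installed to, which is handy later when you need the path to a template file.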

Review the available commands:

Get-Command -Module ReportCardPS

Get-ReportCardTemplateSet: Searches directories for JSON files that can be used as report templates.

New-ReportCard: Ingests a JSON file and loops through the data to create the cards within the report.

If you run ‘Get-ReportCardTemplateSet’ with no parameters, it will return the default template files included with the module.

Get-ReportCardTemplateSet

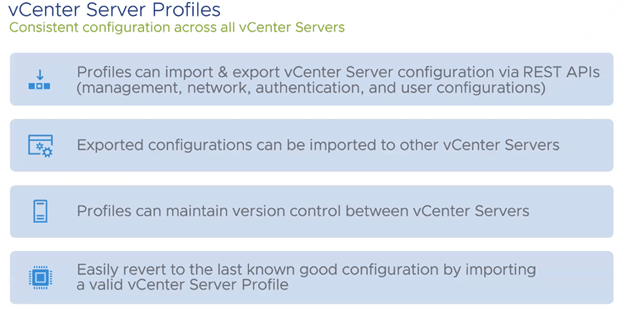

The current template file is named ‘vCenterHomeReport.json’ and produces the HTML report shown above. It’s as close as I could get to the vCenter Home page.

Here is a link to the JSON file used to create the report.

When you are ready, connect to your vCenter:

Connect-VIServer 192.168.2.220 -User administrator -Password VMware1!
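Typing the password on the command line leaves it in your console history. If that bothers you, here is a minimal sketch that prompts for credentials instead. It uses the standard Get-Credential cmdlet and the -Credential parameter of PowerCLI's Connect-VIServer; the server address is just the example one from above.

```powershell
# Prompt for the vCenter user name and password instead of typing them in clear text.
$credential = Get-Credential -Message 'vCenter credentials'

# Connect using the credential object (example address, replace with your own vCenter).
Connect-VIServer -Server 192.168.2.220 -Credential $credential
```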

Then you are ready to run your first report. Keep in mind this is still a new module, and there are currently no built-in output options, so the HTML is simply piped to a file. Please feel free to submit a request!

New-ReportCard -Title MyFirstReportCard -JsonFilePath "C:\Program Files\WindowsPowerShell\Modules\ReportCardPS\0.0.8\lib\VMware\vCenterHomeReport.json" | Out-File C:\temp\reportcardps\test.html -Encoding ascii
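The path above is tied to version 0.0.8 and to an all-users install. A rough sketch of how you could look the template up instead is below. It assumes the lib\VMware\vCenterHomeReport.json layout shown above stays the same in other versions of the module, and the output folder is just the example one from this post.

```powershell
# Find the newest installed copy of ReportCardPS, wherever it was installed.
$module = Get-Module -ListAvailable -Name ReportCardPS |
    Sort-Object Version -Descending |
    Select-Object -First 1

# Assumption: the template keeps the same relative path as in 0.0.8.
$template = Join-Path -Path $module.ModuleBase -ChildPath 'lib\VMware\vCenterHomeReport.json'

# Make sure the output folder exists before writing to it.
$outDir = 'C:\temp\reportcardps'
New-Item -ItemType Directory -Path $outDir -Force | Out-Null
$report = Join-Path -Path $outDir -ChildPath 'test.html'

# Build the report, save it, and open it in the default browser.
New-ReportCard -Title MyFirstReportCard -JsonFilePath $template |
    Out-File -FilePath $report -Encoding ascii
Invoke-Item -Path $report
```

You still need an active Connect-VIServer session before running this, since the template pulls its data from vCenter.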

There you have it! Oh, it also comes with a built-in theme switcher, if you like that kinda thing…

All docs are updated for both modules. Enjoy!

ReportCardPS

ClarityPS